Problem

All right, here's your motivation: Your name is Lucas, you're an average developer who wants to create beautiful things you can be proud of. One day, you'll think about hitting your clients with a "computers for dummies" book.

No, forget that part. We'll improvise... just keep it kind of loosey-goosey. You want to create a form that lets clients upload files, and you need it done yesterday! ACTION.

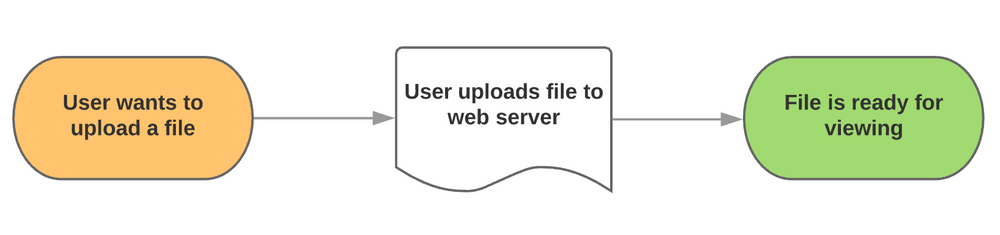

Most developers start out by doing something like this:

Uploads hit the server, probably landing in some web-accessible directory like /uploads/, and the developer figures everything is great.

Some might even learn about attack vectors and move the uploads directory somewhere that isn't web-accessible.

Then comes the day when you learn about S3. Storing files on someone else's computer? Sign me up.

So the easiest solution is often to copy files from your server to S3 after an upload.

But this is the wrong approach: now you're touching every file twice, paying for double the bandwidth, and, on large files, adding the kind of latency any user would get sick of.

So, how do we fix it?

Assuming you have already set up an S3 disk in Laravel's filesystem config, the first step is to create an endpoint that generates a signed URL for the client to upload files to.

In this example I'm calling it `s3-url`.

```php
// routes/web.php
Route::get('/s3-url', 'SignedS3UrlCreatorController@index');
```

And the relevant controller logic:

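```php
<?php

namespace App\Http\Controllers;

use Illuminate\Support\Facades\Storage;
// use Ramsey\Uuid\Uuid; // needed if you enable the UUID file name below

class SignedS3UrlCreatorController extends Controller
{
    public function index()
    {
        return response()->json([
            'error' => false,
            'url' => $this->get_amazon_url(request('name')),
            'additionalData' => [
                // Uploading many files and need a unique name? UUID it!
                //'fileName' => Uuid::uuid4()->toString()
            ],
            'code' => 200,
        ], 200);
    }

    private function get_amazon_url($name)
    {
        $s3 = Storage::disk('s3');

        // Laravel <= 8 (Flysystem 1). On Laravel 9+ use $s3->getClient() instead.
        $client = $s3->getDriver()->getAdapter()->getClient();

        // How long the signed URL stays valid.
        $expiry = "+90 minutes";

        // The S3 operation the URL will authorise: a PUT of the object named $name.
        $command = $client->getCommand('PutObject', [
            'Bucket' => config('filesystems.disks.s3.bucket'),
            'Key' => $name,
        ]);

        return (string) $client->createPresignedRequest($command, $expiry)->getUri();
    }
}
```

This means that any GET request that passes a `name` parameter gets back a signed URL that anyone holding it can use to upload to that key until it expires.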
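Since the signed URL only works for the `$expiry` window, it can be handy to see exactly when a given URL stops working, for example when deciding whether a stored URL is still usable. The sketch below assumes the AWS SDK produced a Signature Version 4 presigned URL, which carries `X-Amz-Date` (signing time, UTC) and `X-Amz-Expires` (lifetime in seconds) query parameters; `presignedUrlExpiry` is a hypothetical helper, not part of any SDK, and the example URL is made up:

```javascript
// Sketch: work out when a SigV4 presigned S3 URL stops being valid.
// Assumes the URL carries X-Amz-Date (YYYYMMDDTHHMMSSZ, UTC) and
// X-Amz-Expires (lifetime in seconds) as query parameters.
function presignedUrlExpiry(signedUrl) {
  const params = new URL(signedUrl).searchParams;
  const amzDate = params.get('X-Amz-Date');
  const rawExpires = params.get('X-Amz-Expires');
  if (amzDate === null || rawExpires === null || !/^\d+$/.test(rawExpires)) {
    throw new Error('Not a SigV4 presigned URL: ' + signedUrl);
  }

  const m = /^(\d{4})(\d{2})(\d{2})T(\d{2})(\d{2})(\d{2})Z$/.exec(amzDate);
  if (!m) {
    throw new Error('Unexpected X-Amz-Date format: ' + amzDate);
  }

  // Signing time in milliseconds since the epoch (months are 0-based in Date.UTC).
  const signedAt = Date.UTC(+m[1], +m[2] - 1, +m[3], +m[4], +m[5], +m[6]);
  return new Date(signedAt + Number(rawExpires) * 1000);
}

// Made-up URL for illustration only.
const example = 'https://example-bucket.s3.amazonaws.com/photo.png' +
  '?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Date=20240101T120000Z' +
  '&X-Amz-Expires=5400&X-Amz-Signature=abc123';
console.log(presignedUrlExpiry(example).toISOString()); // 2024-01-01T13:30:00.000Z
```

With `$expiry = "+90 minutes"` you would expect `X-Amz-Expires=5400` (90 × 60 seconds) in the generated URL.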

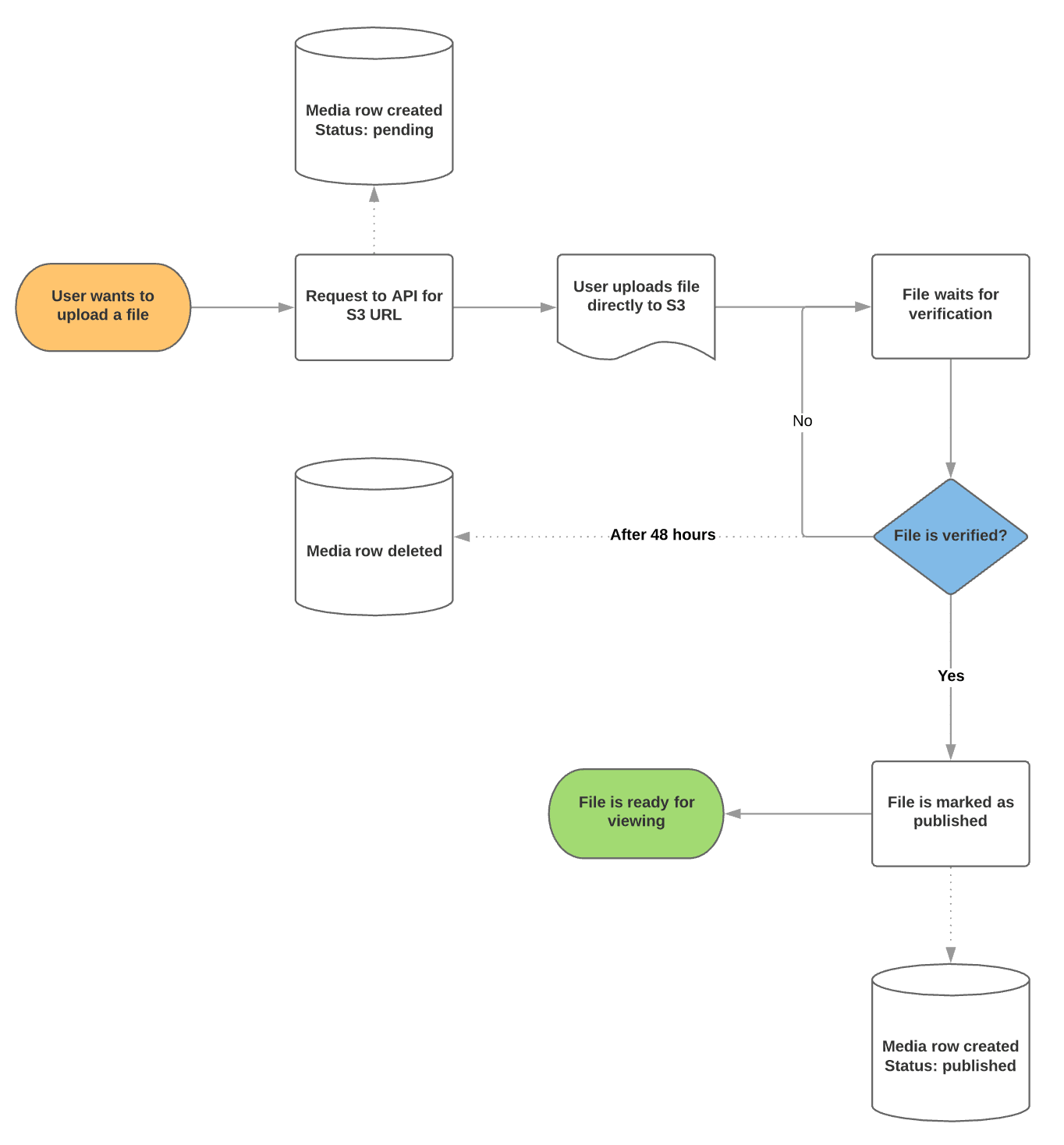

It's probably a good idea to keep track of these URLs and/or file names in a database just in case you want to query or remove them programmatically later on.
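A minimal sketch of what such a record might hold, written in JavaScript only to keep the examples in one language (in this app the row would live on the Laravel side). The field names, the `pending` status and `makeUploadRecord` are all hypothetical; the UUID key follows the "UUID it!" hint in the controller:

```javascript
// Hypothetical upload record; field names and statuses are illustrative only.
const { randomUUID } = require('crypto'); // Node.js >= 14.17; browsers expose crypto.randomUUID()

function makeUploadRecord(originalName, now = new Date()) {
  // Keep the extension so the stored object still looks like the original file type.
  const dot = originalName.lastIndexOf('.');
  const ext = dot > 0 ? originalName.slice(dot).toLowerCase() : '';

  return {
    originalName,              // what the user called the file
    key: randomUUID() + ext,   // unique S3 object key, so uploads can't collide
    status: 'pending',         // e.g. flip to 'uploaded' once the client confirms
    createdAt: now.toISOString(),
  };
}

const record = makeUploadRecord('Holiday Photo.JPG', new Date(Date.UTC(2024, 0, 1)));
console.log(record.key.endsWith('.jpg'), record.createdAt); // true 2024-01-01T00:00:00.000Z
```

Storing the original name separately means the S3 key can stay opaque while you still show users the name they uploaded.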

The second step is to use something like DropzoneJS.

DropzoneJS is an open source library that provides drag’n’drop file uploads with image previews.

Dropzone will find all form elements with the class `dropzone`, automatically attach itself, and upload files dropped into it to the URL in the form's `action` attribute.

The uploaded files can be handled on the server just as if they had been submitted through a regular HTML form:

```html
<!-- id="dropzone" matches the '#dropzone' selector used in the script below -->
<form action="/file-upload" class="dropzone" id="dropzone">
    <div class="fallback">
        <input name="file" type="file" multiple />
    </div>
</form>
```

But we don't want to handle uploads like a regular form, so we need to disable Dropzone's auto-discover feature:

```javascript
Dropzone.autoDiscover = false;
```

Then create a custom configuration that watches for files added to the queue, like so:

var dropzone = new Dropzone('#dropzone',{

url: '#',

method: 'put',

autoQueue: false,

autoProcessQueue: false,

init: function() {

/*

When a file is added to the queue

- pass it along to the signed url controller

- get the response json

- set the upload url based on the response

- add additional data (such as the uuid filename)

to a temporary parameter

- start the upload

*/

this.on('addedfile', function(file) {

fetch('/s3-url?&name='+file.name, {

method: 'get'

}).then(function (response) {

return response.json();

}).then(function (json) {

dropzone.options.url = json.url;

file.additionalData = json.additionalData;

dropzone.processFile(file);

});

});

/*

When uploading the file

- make sure to set the upload timeout to near unlimited

- add all the additional data to the request

*/

this.on('sending', function(file, xhr, formData) {

xhr.timeout = 99999999;

for (var field in file.additionalData) {

formData.append(field, file.additionalData[field]);

}

});

/*

Handle the success of an upload

*/

this.on('success', function(file) {

// Let the Laravel application know the file was uploaded successfully

});

},

sending: function(file, xhr) {

var _send = xhr.send;

xhr.send = function() {

_send.call(xhr, file);

};

},

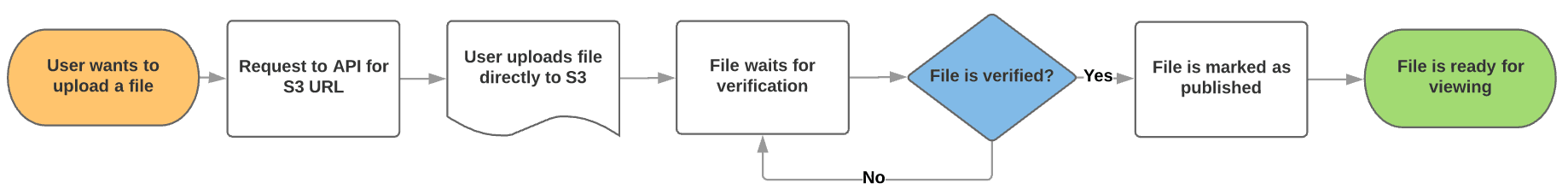

});This provides the following upload flow.

No double handling and secure S3 uploads, nice.
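One thing to keep an eye on in the configuration above: every file writes its signed URL to the shared `dropzone.options.url`, which relies on Dropzone reading that option before the next signed URL arrives. Dropzone also accepts a function for its `url` option (called with the files being sent), so a variation is to keep each signed URL on its own file object. A sketch under that assumption; `signedUrlEndpoint`, `attachSignedUrl`, `uploadUrlFor` and the `uploadURL` property are hypothetical names, and the Dropzone wiring is left in comments because it needs a browser:

```javascript
// Hypothetical helpers for keeping each file's signed URL on the file itself.

// Build the signed-URL request, escaping characters like spaces, & and #.
function signedUrlEndpoint(fileName) {
  return '/s3-url?name=' + encodeURIComponent(fileName);
}

// Validate the controller's JSON ({ error, url, additionalData, code }) and
// store the result on the Dropzone file object.
function attachSignedUrl(file, json) {
  if (!json || json.error || typeof json.url !== 'string') {
    throw new Error('No signed URL returned for ' + file.name);
  }
  file.uploadURL = json.url;
  file.additionalData = json.additionalData || {};
  return file;
}

// Value for Dropzone's `url` option: with uploadMultiple left at its default
// (false), each request carries one file, so use that file's own URL.
function uploadUrlFor(files) {
  return files[0].uploadURL;
}

// Browser wiring (not run here):
// var dropzone = new Dropzone('#dropzone', {
//   url: uploadUrlFor,
//   method: 'put',
//   autoQueue: false,
//   autoProcessQueue: false,
//   init: function () {
//     this.on('addedfile', function (file) {
//       fetch(signedUrlEndpoint(file.name))
//         .then(function (response) { return response.json(); })
//         .then(function (json) { dropzone.processFile(attachSignedUrl(file, json)); });
//     });
//   },
//   // ...plus the same `sending` handlers as in the configuration above
// });
```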

From here it's possible to expand the uploading and handling logic to update database records. But I'll leave that to you.
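As a starting point, the empty `success` handler in the configuration above could send Laravel enough to update a record. A minimal sketch: the `/s3-upload-complete` route, the payload fields and `buildUploadConfirmation` are all hypothetical, and it assumes virtual-hosted-style S3 URLs (`https://bucket.s3.amazonaws.com/key?...`), where the URL path is the object key:

```javascript
// Hypothetical payload for telling the Laravel app an upload finished.
// Assumes a virtual-hosted-style signed URL, where the path is "/<object key>".
function buildUploadConfirmation(file, signedUrl) {
  const { pathname } = new URL(signedUrl);
  return {
    key: decodeURIComponent(pathname.replace(/^\//, '')), // object key in the bucket
    originalName: file.name,
    size: file.size, // bytes, as reported by the browser's File object
  };
}

// Inside the Dropzone `success` handler (browser only; route is hypothetical,
// and the CSRF header assumes the page has Laravel's csrf-token meta tag).
// Dropzone keeps the request on file.xhr, whose responseURL is the signed URL.
// fetch('/s3-upload-complete', {
//   method: 'POST',
//   headers: {
//     'Content-Type': 'application/json',
//     'X-CSRF-TOKEN': document.querySelector('meta[name="csrf-token"]').content,
//   },
//   body: JSON.stringify(buildUploadConfirmation(file, file.xhr.responseURL)),
// });
```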

All source is available on GitHub for your viewing pleasure.

NB:

Don't forget the CORS config for your S3 bucket:

```xml
<?xml version="1.0" encoding="UTF-8"?>
<CORSConfiguration xmlns="http://s3.amazonaws.com/doc/2006-03-01/">
    <CORSRule>
        <AllowedOrigin>*</AllowedOrigin>
        <AllowedMethod>GET</AllowedMethod>
        <AllowedMethod>PUT</AllowedMethod>
        <AllowedMethod>POST</AllowedMethod>
        <AllowedMethod>DELETE</AllowedMethod>
        <MaxAgeSeconds>3000</MaxAgeSeconds>
        <ExposeHeader>Access-Control-Allow-Origin</ExposeHeader>
        <AllowedHeader>*</AllowedHeader>
    </CORSRule>
</CORSConfiguration>
```